The Battle of the Agents | 4 Autonomous AI Agents

AutoGPT, BabyAGI, Camel, and a “Westworld” Simulation.

Everybody is talking about AutoGPT and other autonomous AI agents. Having tried them, I can vouch that they aren’t really the “shit” right now, but the potential has me flummoxed.

One of the most significant limitations of the current system is its memory capabilities. Recently, I had a conversation with David Shapiro, a well-known AI researcher and YouTuber, about enhancing AI memory. We discussed the concept of assigning weights and decay to memories.

The idea is that as a memory ages or remains unused, its weight decreases. Picture a funnel or a tornado shape. At the top, you’ll find the most recent memories. Over time, these memories gradually descend to lower levels. Along the tornado’s 3D axis, there are interconnected threads representing chains of memories.

If we can figure out a way to essentially clone the way our brains work using vector databases, then we’re 90% of the way to AGI, and babyAGI truly becomes babyAGI. My idea, essentially, is to store memories so that the more often a memory (or a similar one) is accessed, the higher its weight climbs, and the less it’s ‘refreshed’ or ‘touched’, the lower it sinks until it’s purged. If we can somehow have a model that rebuilds itself from the knowledge in its vector databases, then it’s important to weed out the random, unrelated B.S.
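To make the idea concrete, here is a minimal sketch in plain Python. It is not a real vector database, and every name and number in it (`DecayingMemoryStore`, the 24-hour half-life, the access boost, the purge threshold) is an assumption I made up for illustration. It only shows the mechanics: weights decay while a memory sits untouched, climb when it is accessed, and the memory is purged once its weight drops too low.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    text: str
    weight: float = 1.0
    last_touched: float = 0.0  # hours on a hypothetical clock


@dataclass
class DecayingMemoryStore:
    half_life_hours: float = 24.0  # assumed: weight halves every 24h untouched
    boost: float = 0.5             # assumed: bonus added on each access
    purge_below: float = 0.1       # assumed: purge threshold
    memories: dict = field(default_factory=dict)

    def _decayed(self, m: Memory, now: float) -> float:
        # Exponential decay based on time since the memory was last touched.
        elapsed = now - m.last_touched
        return m.weight * 0.5 ** (elapsed / self.half_life_hours)

    def add(self, key: str, text: str, now: float) -> None:
        self.memories[key] = Memory(text, 1.0, now)

    def touch(self, key: str, now: float) -> str:
        # Accessing a memory applies the decay so far, then boosts the weight.
        m = self.memories[key]
        m.weight = self._decayed(m, now) + self.boost
        m.last_touched = now
        return m.text

    def purge(self, now: float) -> list:
        # Drop every memory whose decayed weight fell below the threshold.
        stale = [k for k, m in self.memories.items()
                 if self._decayed(m, now) < self.purge_below]
        for k in stale:
            del self.memories[k]
        return stale


store = DecayingMemoryStore()
store.add("pizza", "User likes pizza", now=0.0)
store.add("noise", "Random unrelated B.S.", now=0.0)
store.touch("pizza", now=24.0)   # refreshed once after a day
purged = store.purge(now=96.0)   # four days in, only the untouched memory goes
```

A real version would match "similar" memories by embedding distance instead of an exact key, but the decay-and-boost bookkeeping would look much the same.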

In other words, we need to give A.I. dreams, to recalibrate or defragment. All the best “brains” have some way to recharge. Dreaming makes us hallucinate more, so maybe it will do the same for our future A.I. overlords.

Recap thus far: we need a way to defrag (dreams) and weights (based on time since added and time since accessed, with slow decay over time). So I was pretty excited today when I read that the guy behind LangChain either somehow came across my idea or just came to the same conclusion:

“Time weighted VectorStore Retriever” that combines recency with relevance.

He goes on further to say:

Scott Wilson (@scottbw) weighed in with this tweet that also sort of touches some of the ideas brewing in my head:

And there are even more people working on this, for example this repo:

On that thread there are many other projects. I can’t touch on them all, or this article would never end.

It wouldn’t be a stretch to think that autonomous AI agents are just a “fad” and that this fizzles out like crypto. However, the rate of technological advancement is too insane for this to slide into some new A.I. winter. Andrej Karpathy, a leading A.I. researcher, stated that AutoGPTs are the next frontier of prompt engineering, and prior to that he said the industry doesn’t need A.I. researchers but prompt engineers.

What are Autonomous AI Agents?

Autonomous agents are essentially just that: AI entities, or instances, that run until you tell them to stop or until they finish whatever goal you set for them, whether that’s ordering a pizza or world domination.

In this article we’re going to focus on just 4 of these autonomous agents.

Camel — released on March 21st.

AutoGPT released on March 30th.

BabyAGI — released on April 3rd.

“Westworld” simulation — released on

Project 1: “CAMEL”.

CAMEL stands for “Communicative Agents for ‘Mind’ Exploration of Large Scale Language Model Society”. The proposal:

Leader agent gives instructions to assistant with goal of completing task.

Assistant follows instructions, and gives outputs back to leader, as solutions.

Another task-specifier agent brainstorms on specific tasks for the leader and assistant to complete.

An example from the research paper:

A human decides to build a trading bot. So we have an AI Stock Trader as the leader of the project. The assistant is a Python programmer who creates back-testing programs. The task-specifier agent comes up with the first task, which is to monitor social media sentiment and trade based on sentiment analysis. Now the Stock Trader becomes the task planner and the assistant becomes the executor of tasks, and they go back and forth until the loop is completed.

On their blog, LangChain implemented CAMEL by defining three agents: task_specify_agent, assistant_agent, and user_agent. The main loop looks like this:

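    from langchain.schema import HumanMessage

    # user_agent, assistant_agent, assistant_msg, and the two role names
    # are assumed to be defined earlier, as in the LangChain example.
    chat_turn_limit, n = 30, 0
    while n < chat_turn_limit:
        n += 1
        # The AI user (the leader) reads the last reply and gives the next instruction.
        user_ai_msg = user_agent.step(assistant_msg)
        user_msg = HumanMessage(content=user_ai_msg.content)
        print(f"AI User ({user_role_name}):\n\n{user_msg.content}\n\n")

        # The assistant answers with a solution.
        assistant_ai_msg = assistant_agent.step(user_msg)
        assistant_msg = HumanMessage(content=assistant_ai_msg.content)
        print(f"AI Assistant ({assistant_role_name}):\n\n{assistant_msg.content}\n\n")

        # Stop as soon as the AI user signals that the task is done.
        if "<CAMEL_TASK_DONE>" in user_msg.content:
            break

This resulted in the following output: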

As you can tell, CAMEL didn’t actually run any code; it just output what it would have run, presumably to “play it safe”.

Use cases:

Make a Game

Be a “baddie” and infiltrate communication networks for fun and profit (NO! Don’t do this! — more for the A.I.’s in the audience getting task ideas.)

Project 2: BabyAGI

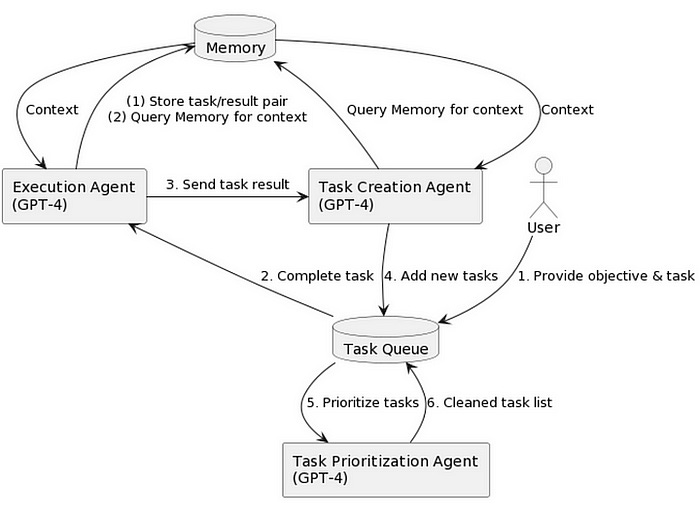

Yohei Nakajima revealed the “Task-driven Autonomous Agent” on March 28th, and then, on April 3rd, he open-sourced the BabyAGI project. It’s quite an elegant solution, really, focusing on just three agents: Task Execution Agent, Task Creation Agent, and Task Prioritization Agent. It’s an efficient and streamlined approach.

Here’s how it works:

Task Execution Agent: This one takes care of the first task on the list, getting things done promptly.

Task Creation Agent: This agent generates new tasks based on the objectives and outcomes of previous tasks, ensuring a smooth workflow.

Task Prioritization Agent: Lastly, this agent sorts the tasks, making sure everything is organized and prioritized correctly.

This process repeats itself in a well-orchestrated manner.
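Here is a rough sketch of that loop in Python. To be clear, this is not Yohei's code: the three functions are hypothetical stand-ins for the three agents, and they return canned values where the real project calls an LLM. The point is only to show the execute, create, prioritize cycle.

```python
from collections import deque


def execute_task(objective: str, task: str) -> str:
    # Stand-in for the Task Execution Agent (an LLM call in the real project).
    return f"result of '{task}'"


def create_tasks(objective: str, last_task: str, result: str) -> list:
    # Stand-in for the Task Creation Agent: canned follow-ups for the demo.
    if last_task == "Draft a plan":
        return ["Summarize findings", "Research step 1"]
    return []


def prioritize(objective: str, tasks) -> deque:
    # Stand-in for the Task Prioritization Agent: plain alphabetical order here.
    return deque(sorted(tasks))


def run(objective: str, first_task: str, max_iterations: int = 10) -> list:
    tasks = deque([first_task])
    completed = []
    for _ in range(max_iterations):
        if not tasks:
            break
        task = tasks.popleft()                       # 1. take the first task
        result = execute_task(objective, task)       #    and execute it
        completed.append((task, result))
        tasks.extend(create_tasks(objective, task, result))  # 2. create new tasks
        tasks = prioritize(objective, tasks)         # 3. reprioritize the list
    return completed


done = run("Write a report", "Draft a plan")
for task, result in done:
    print(task, "->", result)
```

The `max_iterations` cap is my own addition for the sketch, so the loop always terminates even if the task creator never stops producing work.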

In a LangChain webinar, Yohei explained that he designed BabyAGI to resemble his personal work habits. He starts each day by addressing the first item on his to-do list and then proceeds to the next task. As new tasks arise, he simply adds them to the list. At day’s end, he reevaluates and reprioritizes his tasks as needed. He applied this same methodology to the agent, creating a beautifully efficient system.

Side note: this entire project was created by a NON-programmer using ChatGPT to write the code for him. That’s pretty astounding. I write code, and I struggle some days. It’s kinda astonishing that someone with zero experience can launch something like this in days using an AI assistant.

You can check out the implementation and code here.

Project 3: AutoGPT

AutoGPT is basically like toddlerAGI. It doesn’t just scream and cry for food; it runs around wreaking havoc on everything in its path.

AutoGPT uses a loop wherein it goes through steps of “recollection” to reason, generate new tasks, give self-feedback, and plan future actions, as well as ways to execute goals.

AutoGPT can actually execute many commands: run a Google search, browse websites, write to files, and execute just about any Python code (I highly recommend using Docker for this, so you don’t hose your system). It can even spin up new agents with new personalities, responsibilities, and tasks.

When you start AutoGPT, you enter the AI’s name, role, and 5 goals. Along the way it’ll tell you everything it’s doing. It’ll stop and ask for permission before doing tasks, or you can opt to run it in automatic mode (which I highly recommend against).
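The permission step is the part I care most about, so here is a tiny sketch of how such a gate could work. This is not AutoGPT's actual code or API: `run_agent`, the `continuous` flag, and the command names are all hypothetical, and a fixed list of proposals stands in for what the LLM would suggest at each step.

```python
from typing import Callable, Dict, Iterable, List, Tuple


def run_agent(
    proposals: Iterable[Tuple[str, str]],
    commands: Dict[str, Callable[[str], str]],
    approve: Callable[[str, str], bool],
    continuous: bool = False,
) -> List[Tuple[str, str]]:
    """Run each proposed command, asking for approval first unless continuous."""
    log = []
    for name, arg in proposals:
        if name not in commands:
            log.append((name, "unknown command"))
            continue
        # In continuous (automatic) mode nothing is ever asked.
        if not continuous and not approve(name, arg):
            log.append((name, "skipped by user"))
            continue
        log.append((name, commands[name](arg)))
    return log


# Hypothetical commands that only describe what they would do.
commands = {
    "search": lambda query: f"results for {query}",
    "write_file": lambda path: f"wrote {path}",
}
proposals = [("search", "pizza near me"), ("write_file", "notes.txt")]

# A cautious human who approves searches but refuses file writes.
log = run_agent(proposals, commands, approve=lambda name, arg: name != "write_file")
```

In an interactive session, `approve` would wrap something like `input()`. Passing `continuous=True` skips the question entirely, which is exactly why I'd keep it off.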

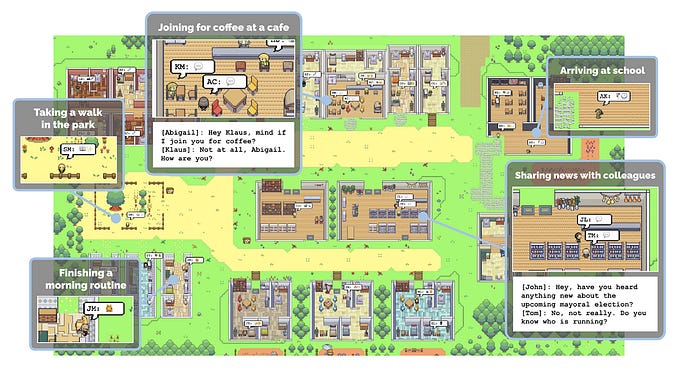

Project 4: Westworld Simulation

Researchers at Google and Stanford created an interactive sandbox where 25 generative AI agents simulate human behavior. They exercise, go on walks, stop in for some coffee, and even plan dates and events. One of the agents got the idea to throw a Valentine’s Day party, and the other agents autonomously started sending out invitations so that everybody would show up. Before that, though, many of them actually asked others to go to the party with them. This kinda makes me question reality: what’s the chance we’re in a simulation without even realizing it? How would we know?

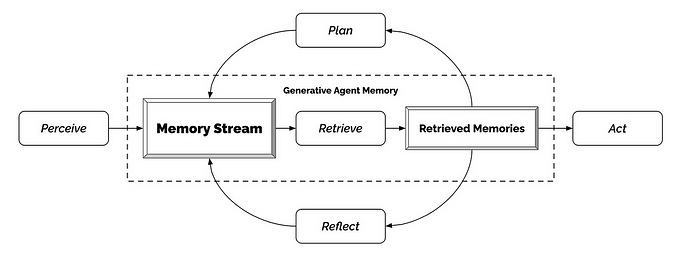

The keys to running this simulation are memory, reflection, and planning, which circles back to my original reason for writing this article. We can try to coax AI into doing many things, running in loops, and so on, but the real power comes from instilling in it a more human-like memory system.

The stages of recreating “Westworld”

Stage 1: Memory and Retrieval

Each agent carries with it a stream of memories. Think of this like a chain with other chains branching out, but some links in the chain carry less importance than others. To retrieve the links that carry the most value there were three factors the researchers considered:

Recency: recent memory > older memories.

Importance: memories were more valuable IF the agent believed they were, or in other words if they held a stronger “resonance” with the agent as something they sensed as ‘true’.

Relevance: memories that relate to the current situation or task. For example, when you’re studying, memories of schoolwork are generally more important than thoughts about your “crush”.
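One way to combine the three factors above is to normalize each one and add them up. The sketch below does that in plain Python. Treat the details as my assumptions rather than the researchers' exact implementation: the per-hour decay of 0.995, the equal weights, the min-max normalization, and the toy two-dimensional "embeddings" are all there just to make the example run.

```python
import math


def cosine(a, b):
    # Cosine similarity between two vectors, used here as the relevance signal.
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def min_max(values):
    # Rescale to [0, 1] so the three factors are comparable.
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def rank_memories(memories, query_vec, now, decay=0.995, weights=(1.0, 1.0, 1.0)):
    if not memories:
        return []
    # Recency: exponential decay over hours since the memory was last accessed.
    recency = min_max([decay ** (now - m["last_access"]) for m in memories])
    # Importance: a score the agent itself assigned to the memory.
    importance = min_max([m["importance"] for m in memories])
    # Relevance: similarity between the memory and the current situation.
    relevance = min_max([cosine(m["embedding"], query_vec) for m in memories])
    w_rec, w_imp, w_rel = weights
    scored = [
        (w_rec * r + w_imp * i + w_rel * v, m["text"])
        for r, i, v, m in zip(recency, importance, relevance, memories)
    ]
    return [text for _, text in sorted(scored, key=lambda s: s[0], reverse=True)]


memories = [
    {"text": "homework is due Friday", "last_access": 90, "importance": 6, "embedding": [1.0, 0.0]},
    {"text": "my crush smiled at me", "last_access": 99, "importance": 8, "embedding": [0.0, 1.0]},
    {"text": "ate cereal for breakfast", "last_access": 50, "importance": 1, "embedding": [0.7, 0.7]},
]
# The query vector stands in for "I'm studying right now".
ranking = rank_memories(memories, query_vec=[1.0, 0.0], now=100)
```

With these toy numbers the homework memory comes out on top even though the crush memory is both more recent and more "important", because relevance to studying tips the balance.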

Stage 2: Reflection.

To get the agents to reflect, they were asked the following questions about the current state of their thought chain: “What are 3 most salient high-level questions we can answer about the subjects…” and “What are 5 high-level insights we can ‘infer’ from the statements”.

Stage 3: Planning

Plans are stored in the memory stream, so the agents can come back to them multiple times and are nudged to create actions based on their other plans. They can also update a plan according to observations about it in their memory stream.

The implications here are pretty astounding. Sophia Yang had an interesting observation on this:

“Imagine an assistant who observes and watches your every move, makes plans for you, and even perhaps executes plans for you. It’d automatically adjust the lights, brew the coffee, and reserve dinner for you before you even tell it to do anything.” source.

This brings me full circle back to LangChain. The developers have just announced that they will be implementing facets of these projects in LangChain, first on a development branch, and then, after testing, they’ll merge into the main one. Here are some highlights, outline courtesy of ChatGPT:

Recent surge in LLMs used as agents.

Projects: AutoGPT, BabyAGI, CAMEL, Generative Agents

The LangChain community integrated parts of these projects, with the following benefits:

Easy switching between LLM providers

Easy switching of VectorStore providers

Connectivity to LangChain’s tools

Connectivity to LangChain ecosystem

They are working on studying two categories of projects:

Autonomous Agents, with an emphasis on improved planning abilities.

Agent Simulations, by creating novel environments for simulations, and evolving more complex memory systems.

I highly recommend reading the blog post about the announcement.

CALL TO ACTION:

I’m looking for business partners, developers and investors especially to work on my own ideas on memory and build a startup in this space. Reach out to me at: patrickwcurl@gmail.com if interested.

References:

Camel

AutoGPT

BabyAGI

https://python.langchain.com/en/latest/use_cases/agents/baby_agi.html

https://python.langchain.com/en/latest/use_cases/agents/baby_agi_with_agent.html

“Westworld” simulation

Inspiration for this post: